How Generative AI Pushes Data Streaming to the Next Level

Author: Sam Ward

Release Date: 02/03/2026

Understanding AI: Discriminative AI - The Librarian

Artificial Intelligence. Most of us use it in our daily lives, potentially without even realising it. That Alexa in your home – the one you use to set reminders, check the weather, or ask deep questions like 'is my blue the same as your blue?' – that’s AI. Granted, Alexa won’t actually be able to broach the subject of Qualia (a philosophical term for the subjective quality of an experience for each individual). That’s because these assistants are built on something called Discriminative AI: models that sort and organise data. In essence, the assistant takes a voice command and selects the appropriate response based on what it interprets you to be asking for.

For example, if I say “Hey Alexa, set a timer for 5 minutes”, it’ll start processing upon hearing the wake word (Alexa) and convert the voice command into text. It then looks for the key intent phrase (timer) and any additional information (5 minutes), and we end up with a timer set for 5 minutes. Together, these steps turn a basic voice command into a precise instruction, all built on Discriminative AI. Instead of creating something new, our system (or rather Amazon’s) is just following a series of steps to give us an output.
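To make those steps concrete, here’s a minimal Python sketch of the wake word, intent and extra-information stages, working on text that has already been transcribed. It’s a toy keyword matcher, not how Amazon actually implements Alexa; the intent names, the keyword table and the `parse_command` function are all made up for illustration.

```python
import re

WAKE_WORD = "alexa"  # assumed wake word for this sketch

# Hypothetical keyword -> intent table (not Amazon's real intent names)
INTENT_KEYWORDS = {
    "timer": "SetTimer",
    "weather": "GetWeather",
    "reminder": "SetReminder",
}

UNIT_SECONDS = {"second": 1, "minute": 60, "hour": 3600}


def parse_command(text):
    """Turn transcribed speech into (intent, slots).

    Returns None when the wake word is missing or no known intent is found.
    """
    lowered = text.lower()
    if WAKE_WORD not in lowered:
        return None  # nothing happens until the wake word is heard

    # Only look at what was said after the wake word
    command = lowered.split(WAKE_WORD, 1)[1]

    # Pick the first intent whose keyword appears in the command
    intent = next(
        (name for keyword, name in INTENT_KEYWORDS.items() if keyword in command),
        None,
    )
    if intent is None:
        return None

    # Pull out any duration, e.g. "5 minutes" -> 300 seconds
    slots = {}
    match = re.search(r"(\d+)\s*(second|minute|hour)s?", command)
    if match:
        amount, unit = int(match.group(1)), match.group(2)
        slots["duration_seconds"] = amount * UNIT_SECONDS[unit]

    return intent, slots


print(parse_command("Hey Alexa, set a timer for 5 minutes"))
```

Nothing here is created from scratch: the function can only ever return one of the intents it was given up front, which is the sorting-and-selecting behaviour described above.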

This is where we step into Generative AI.

Understanding AI: Generative AI - The Creator

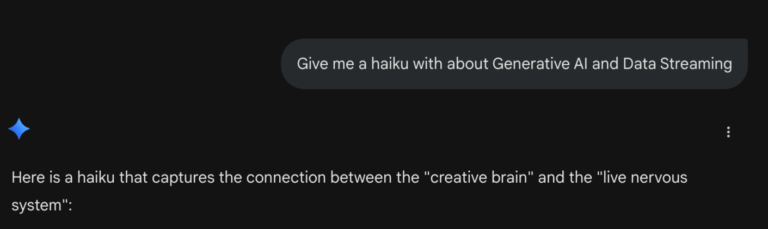

As we stated, while Discriminative AI is used to organise and find data, Generative AI is used to create completely new content, such as text responses to philosophical questions, images, code and videos. While the ethics of AI (specifically Gen AI) are something to consider, we won’t go down that rabbit hole in this blog. Instead, I’ll show you an example of using Gen AI, and task you with entering the exact same prompt into whatever model you currently use, be it Gemini, ChatGPT, Claude etc. Here’s a link to Gemini if you can’t decide!

Do you get the following:

Events move in streams,

Feeding models what they need,

Real-time truth arrives.

Or is your answer different? Chances are, and the chances are pretty big, that you get a completely different haiku.

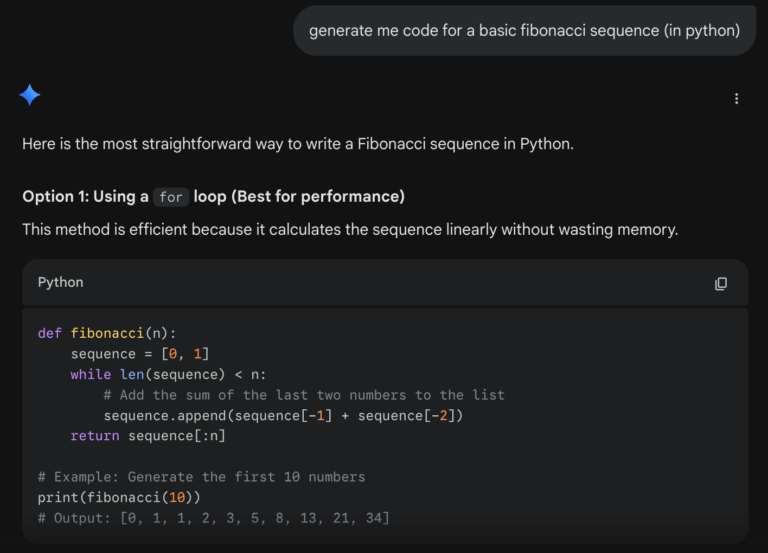

Now, a Haiku is incredibly basic - it’s a simple poem following a very simple pattern of 17 syllables (5-7-5). What happens when we need something more complicated? We’re not really saving THAT much time here making a Haiku, so what happens when we swap to something a little more related to the field we’re interested in: let’s generate some code.

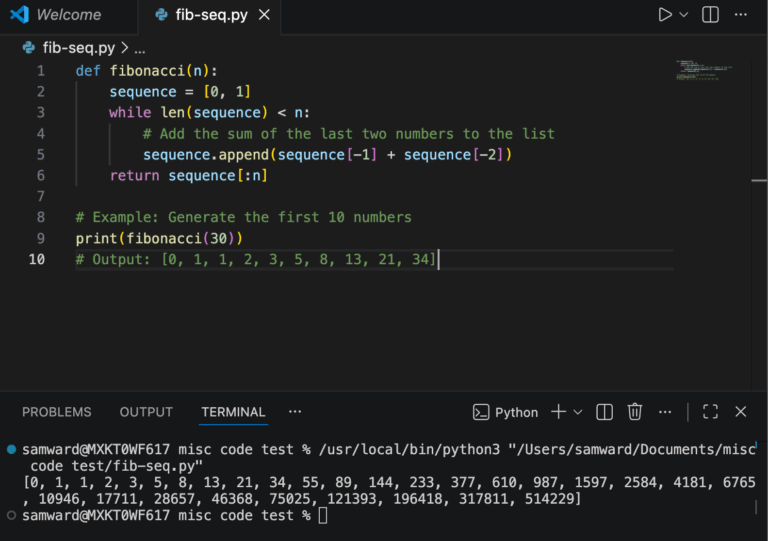

Now, we can start seeing a little bit more value. But don’t take Gemini’s word for it - let’s test this in VS Code and see that it actually works:

When we cross-reference this, we see the values are correct - nice! While we don’t necessarily have to check the LLM’s solution for tasks this simple, things can get a little harder when it comes to more specific requests. This is where we come on to the idea of Hallucinations. Sometimes, when Gen AI has old or incomplete information, it fills in the gaps itself, giving an answer or solution that seems correct but is actually factually incorrect. This is partly because, by nature, LLMs are trained on huge amounts of data from the internet, and this includes both correct and incorrect information.

The Link Between Generative AI and Data Streaming

An article in The New York Times notes that “by pinpointing patterns in that data, an L.L.M. learns to do one thing in particular: guess the next word in a sequence of words”, and that these LLMs/chatbots “produce new text, combining billions of patterns in unexpected ways. This means even if they learned solely from text that is accurate, they may still generate something that is not.”
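A tiny Python sketch can make “guess the next word” feel less abstract. This is a toy bigram model, nowhere near a real LLM: it only counts which word follows which in a made-up two-sentence corpus, then samples the next word from those counts. The function names and the corpus are assumptions for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word(counts, word, rng):
    """Sample a likely next word, weighted by how often it followed `word`."""
    options = counts.get(word)
    if not options:
        return None  # never saw this word, so there is nothing to guess from
    candidates, weights = zip(*options.items())
    return rng.choices(candidates, weights=weights, k=1)[0]


def generate(counts, start, length, seed=None):
    """Generate up to `length` words by repeatedly guessing the next one."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(length - 1):
        guess = next_word(counts, words[-1], rng)
        if guess is None:
            break
        words.append(guess)
    return " ".join(words)


# Both training sentences are true...
corpus = "london is in england . perth is in australia ."
model = train_bigrams(corpus)

# ...but the model may still stitch them into something false.
for seed in range(5):
    print(generate(model, "london", 4, seed=seed))
```

Every sentence in the training text is accurate, yet because “in” was followed by both “england” and “australia”, the model can happily produce “london is in australia”. The same thing is why your haiku and mine probably differ: each word is sampled, not looked up.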

But this doesn’t mean that Generative AI is doomed, eternally stuck in a cycle of being polluted by new data and never able to fully determine whether the new data it’s trained on is valid. Quite the contrary. This is where we can start thinking about how Data Streaming could come into play. Let’s say we’ve got a cybersecurity system that checks logins for potential threats. If a user logs in from London, England at 1PM and, 10 minutes later, the exact same user logs in from Perth, Australia, this should be flagged and the account locked. But what if the problem is more complicated? What if an employee’s laptop is stolen, someone is hacked from within the same city, or the hacker uses a VPN?
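The simple London-to-Perth case can be sketched in a few lines of Python. This is a minimal illustration that assumes each login already carries a latitude and longitude (for example from an IP lookup, which is not shown) and uses the haversine formula for distance. The `Login` class, the function names and the 1,000 km/h threshold are assumptions for this sketch, not part of any real product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 1000.0  # assumed threshold, roughly airliner speed


@dataclass
class Login:
    user: str
    when: datetime
    lat: float  # degrees
    lon: float  # degrees


def distance_km(a, b):
    """Great-circle distance between two logins (haversine formula)."""
    dlat = radians(b.lat - a.lat)
    dlon = radians(b.lon - a.lon)
    h = (
        sin(dlat / 2) ** 2
        + cos(radians(a.lat)) * cos(radians(b.lat)) * sin(dlon / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def is_impossible_travel(prev, curr, max_kmh=MAX_PLAUSIBLE_KMH):
    """True if getting from prev to curr would need an implausible speed."""
    seconds = (curr.when - prev.when).total_seconds()
    hours = max(seconds, 1.0) / 3600  # clamp to avoid dividing by zero
    return distance_km(prev, curr) / hours > max_kmh


one_pm = datetime(2026, 3, 2, 13, 0)
london = Login("sam", one_pm, 51.5074, -0.1278)
perth = Login("sam", one_pm + timedelta(minutes=10), -31.9523, 115.8613)

print(round(distance_km(london, perth)), "km apart")
print("impossible travel:", is_impossible_travel(london, perth))
```

Notice what this check can’t see: a stolen laptop, an attacker in the same city, or a VPN exit node near the real user would all pass it, which is exactly the gap described above.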

This is where Data Streaming and Confluent can address the issues we might have with traditional systems, and solve a major limitation of current security. A traditional system might be able to detect this impossible travel and lock the account, but it is a blunt instrument. It’ll stop the action and give a scrap of information, such as “Login Impossible: Account Locked - Distance (London/Perth)” - but this isn’t enough in the modern era of cybersecurity and cyber attacks. We need more.

We need the context behind what’s happening. Rather than performing an autopsy on a breach that’s already happened, digging through data stuck in a traditional database or scraping through logs and traces, we can use Confluent to feed live information (every login, every file accessed, every command run in cmd and every system change) into an LLM. This transforms our system from a silent protector into a dual-wielding analytical demon of a gatekeeper. Now, our Generative AI tool can analyse the stream of events related to the user in question and recognise not only the impossible leap but also the behaviour of the user, generating a comprehensive security brief that says: “Account Locked (Impossible Travel Detected!!) - additional notes, login attempted to bypass MFA using a known session-hijacking pattern previously seen in this example exploit, here’s a blog that explains this and how to fix it”.
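As a rough sketch of the “feed the stream into an LLM” step, the Python below gathers the most recent events for one user and turns them into a prompt. It deliberately stops short of calling Kafka, Confluent or any model API: the events are plain dictionaries in a list, and the field names (`user`, `ts`, `type`, `detail`) and both functions are assumptions made up for this example.

```python
from collections import deque


def recent_events_for(user, events, limit=20):
    """Keep only the last `limit` events for this user, oldest first."""
    window = deque(maxlen=limit)  # older events fall off the left side
    for event in events:
        if event["user"] == user:
            window.append(event)
    return list(window)


def build_security_prompt(user, events, limit=20):
    """Format a user's recent activity as context for an LLM."""
    lines = [
        f'{e["ts"]} {e["type"]}: {e["detail"]}'
        for e in recent_events_for(user, events, limit)
    ]
    return (
        f"You are a security analyst. The account '{user}' was locked "
        "after an impossible-travel alert.\n"
        "Using ONLY the events below, summarise what happened and "
        "suggest next steps.\n"
        "Events (oldest first):\n" + "\n".join(lines)
    )


# Hypothetical events, in the order they arrived on the stream
stream = [
    {"user": "sam", "ts": "13:00:02", "type": "login", "detail": "London, MFA passed"},
    {"user": "alex", "ts": "13:04:40", "type": "login", "detail": "Leeds, MFA passed"},
    {"user": "sam", "ts": "13:10:15", "type": "login", "detail": "Perth, MFA skipped"},
    {"user": "sam", "ts": "13:10:48", "type": "file_access", "detail": "payroll.xlsx"},
]

print(build_security_prompt("sam", stream))
```

In a real pipeline the list would be replaced by a consumer reading from a topic, and the returned string would be sent to whichever model you use; the point here is only that the model is handed the user’s actual recent behaviour instead of being asked to imagine it.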

Now, back to Hallucinations. We’ve now got an antidote - but to understand how it works, we need to think about why an AI hallucinates: it’s trying to make a best guess based on old information. It might hallucinate a generic response because it hasn’t been trained on a specific event yet and only has old data - this is where we end up with confident, but WRONG answers. We can use Confluent to provide a constant stream of information so that the AI doesn’t have to guess. It can see the exact data and metadata of your business operations as they happen, and it no longer has to imagine what your current inventory levels are or what your system logs look like, because you’re already giving it the context it needs to accurately answer questions it may once have gotten wrong. This gap can now be bridged, and hallucinations reduced and replaced with fact-based analysis of the latest data.
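The inventory example can be sketched the same way. Below, an old snapshot is brought up to date by replaying stock events in order, and the current levels are placed in the prompt so the model doesn’t have to guess. The SKU names, event shape and function names are invented for illustration, and no real streaming or model API is called.

```python
def apply_events(snapshot, events):
    """Return the latest stock level per SKU after replaying events in order."""
    state = dict(snapshot)  # copy, so the old snapshot is left untouched
    for event in events:  # assumed to arrive in order; the last write wins
        state[event["sku"]] = event["quantity"]
    return state


def grounded_prompt(question, state):
    """Put the current facts in front of the question."""
    facts = "\n".join(
        f"- {sku}: {quantity} in stock" for sku, quantity in sorted(state.items())
    )
    return (
        "Answer using only these current inventory levels:\n"
        f"{facts}\n\nQuestion: {question}"
    )


# What a model might "remember" from stale data
old_snapshot = {"widget": 40, "gadget": 7}

# What has happened on the stream since then (hypothetical events)
updates = [
    {"sku": "widget", "quantity": 12},
    {"sku": "widget", "quantity": 0},
]

current = apply_events(old_snapshot, updates)
print(grounded_prompt("Can we ship 10 widgets today?", current))
```

Answering from the old snapshot would give a confident “yes, there are 40”; answering from the replayed state gives the correct “no, there are none”.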

This is the beauty of the marriage of Generative AI and Data Streaming Platforms. Informing LLMs with a continuous source of fresh information is what transforms a basic cybersecurity system into a real-time analytical decision engine. If you’re familiar with Confluent, you’ll know about concepts like Data at Rest and Data in Motion, and how Confluent bridges the gap between them. By using it alongside Generative AI, we reduce the risk of Hallucinations and improve the reliability of the systems we use.

But you may be asking, “Hmm. That’s not entirely what I was looking for. How does Generative AI better my Data Streaming, how does it benefit Confluent, and how can it serve me rather than my data helping train Gen AI better?”

Let’s dive into that, and learn how they can both lift each other up to new heights.

How Generative AI Can Push Data Streaming to the Next Level

So, we’ve established that Data Streaming can be used to improve Generative AI. But Generative AI can give back, and Confluent is just starting to use it. Confluent has an AI Assistant that is available as a preview feature (it’s not in General Availability (GA) yet, so only a select few organisations can use it, and you may not be able to see it). This AI Assistant, which has been trained on every page of Confluent Documentation and every Confluent Support Article (as well as being aware of the Confluent Cloud resources the user has access to), aims to be the perfect tool to help provide you with the exact answers to your problems, be it coding issues, Flink queries not working, issues with RBAC, you name it!

Have a look at the Confluent Documentation for Confluent AI Assistant here: https://docs.confluent.io/cloud/current/release-notes/cflt-assistant.html

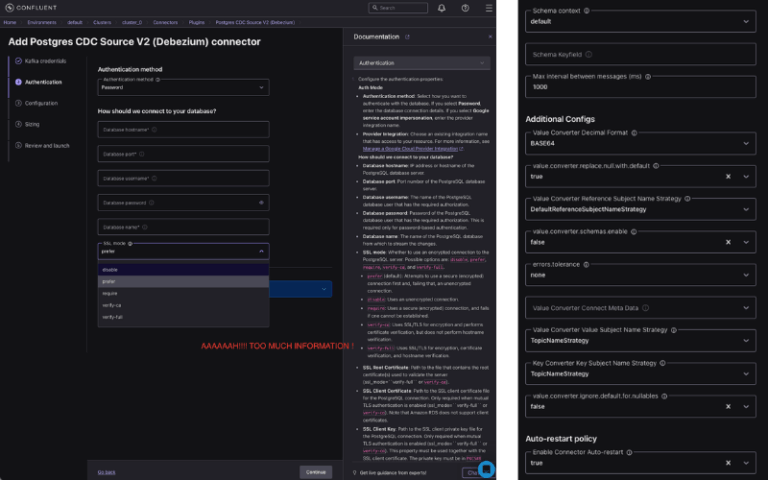

While it’s still early on in the development of this assistant, there’s huge potential here. Let’s say you want to add a new connector - what are the steps currently? Well, you first have to navigate to your Environment, select a Cluster, go to Connectors, then follow a long workflow and enter a lot of information about where your data is coming from. It can be quite a lot, especially for someone not too familiar with Confluent and the specifics of their data source or data sink.

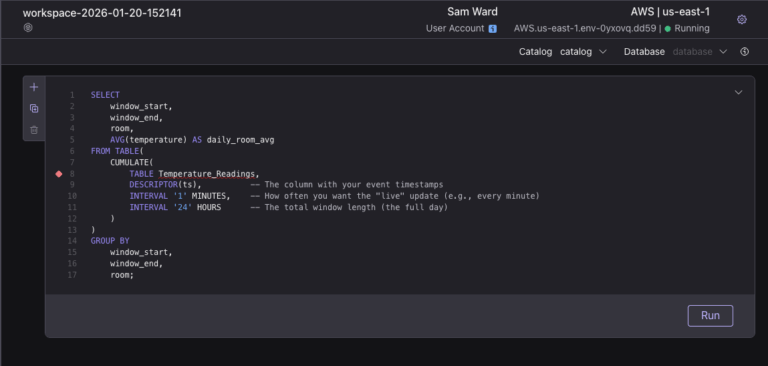

With Generative AI, it could be as simple as asking the AI Assistant: “Hey Assistant, make a stream from my existing source Temperature_Readings, create this new stream called Temperature_Daily_Average that uses the Readings table to calculate a live average off previous data, and put the final values at the end of each day (23:59:59) into my existing MySQL sink as a new table”. Now, this is a lot of words, but the end result would be a new stream and a flow of data, all connected up using Flink and our existing sources/sinks, without us having to write any code or set any of this up manually. What would the query look like? It can’t be THAT complex, you may be thinking.

Well, you can be the judge of that - here’s a look at what that query MIGHT look like:
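To get a feel for the logic such a query has to express, here’s a minimal plain-Python sketch of the core calculation: grouping temperature readings into one bucket per calendar day and averaging each bucket. This is not Flink SQL and leaves out the source, the sink and the live, continuously updating part entirely; the function name and the sample readings are assumptions for illustration.

```python
from collections import defaultdict
from datetime import datetime


def daily_averages(readings):
    """Average temperature per calendar day.

    `readings` is an iterable of (ISO 8601 timestamp, temperature) pairs.
    """
    totals = defaultdict(lambda: [0.0, 0])  # day -> [running sum, count]
    for timestamp, temperature in readings:
        day = datetime.fromisoformat(timestamp).date()
        totals[day][0] += temperature
        totals[day][1] += 1
    return {
        day.isoformat(): total / count
        for day, (total, count) in sorted(totals.items())
    }


# Hypothetical readings from Temperature_Readings
readings = [
    ("2026-03-01T09:00:00", 10.0),
    ("2026-03-01T21:00:00", 14.0),
    ("2026-03-02T00:00:01", 20.0),
]

print(daily_averages(readings))
```

Even this stripped-down version has to decide which day a reading belongs to and when a day counts as finished; a streaming engine has to make the same decisions continuously, on data that may arrive late or out of order.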

We’re still a bit of a way away from this. You may be researching this yourself and be knocked off your feet by the sheer number of articles and confusing blog posts on Confluent’s site, or maybe Gemini or your favourite tool is giving you conflicting information. But here are the facts:

• Most users can’t use Confluent’s AI Assistant yet, but it will be coming; whether it’ll be free for all users or paid is still unknown

• You CAN use existing Gen AI models like Gemini to help write Flink queries after providing them with context

• You CAN leverage existing Gen AI tools (specifically Cursor, which is an AI coding assistant) along with Model Context Protocol (MCP) to query relevant Kafka topics using natural language - see the blog here: https://www.confluent.io/blog/querying-kafka-natural-language/

We’ve gone from talking about Alexa timers to complex Data/Flink pipelines. It might feel like a huge leap, and you might be a little lost with some of the technical jargon, and that’s totally understandable. While we aren’t at the fully autonomous stage yet (where AI builds it all for us), the shift is undeniable and on its way. Today, you might be struggling with behemoth SQL queries and problems with data duplication across multi-joins, or wrestling with Flink over on Confluent Cloud, but tomorrow your role could instead be explaining the context and intent behind the data, and that’s where we’re heading. The time for just processing data is over; it’s time to be an orchestrator of data!